The ESG Technical Validation report is out now and confirms our data on the performance benefits of deploying Riverbed Application Acceleration alongside SD-WAN. A link to the detailed report is available below, but first let’s cover the basics of SD-WAN and why its performance needs optimization.

SD-WAN’s impact

SD-WAN has become a ubiquitous technology. Today, SD-WAN has mostly replaced traditional wide area network infrastructure. It helps improve WAN fault tolerance, makes cloud connectivity easier, and addresses the difficulty of managing geographically dispersed network devices. However, though SD-WAN excels in these areas, it falls short in alleviating performance issues, and this is where application acceleration shines.

The benefits and shortfalls of SD-WAN

SD-WAN is very effective at monitoring link performance and determining the best paths for a specific application. This capability was a great step forward in wide area networking a few years ago, and today it goes without saying that a branch would have two or possibly three WAN connections for fault tolerance and path diversity. Typically, these would be lower cost connections such as broadband or locally available fiber.

An SD-WAN controller uses various methods to monitor link performance and make automatic decisions based on policies and thresholds regarding which path a specific application should use. For example, if an application requires no more than 150ms of jitter, and a particular link reports more than 150ms of jitter, the SD-WAN can dynamically swing traffic to another path. However, paths selected by SD-WAN can adversely affect latency and therefore application performance.
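The threshold-based decision described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular SD-WAN controller is implemented: the `LinkStats` type, the `pick_path` function, and the link measurements are all hypothetical. It shows how a path that satisfies the jitter policy can still carry higher latency.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class LinkStats:
    """Hypothetical per-link measurements reported to the controller."""
    name: str
    jitter_ms: float
    latency_ms: float


def pick_path(links: List[LinkStats], max_jitter_ms: float) -> Optional[LinkStats]:
    """Return the lowest-jitter link within the policy threshold, or None."""
    eligible = [link for link in links if link.jitter_ms <= max_jitter_ms]
    if not eligible:
        return None
    return min(eligible, key=lambda link: link.jitter_ms)


# Made-up numbers: the broadband link violates the 150ms jitter policy,
# so traffic swings to the fiber link even though its latency is higher.
links = [
    LinkStats("broadband", jitter_ms=180.0, latency_ms=40.0),
    LinkStats("fiber", jitter_ms=20.0, latency_ms=95.0),
]
chosen = pick_path(links, max_jitter_ms=150.0)
print(chosen.name, chosen.latency_ms)
```

In this sketch the policy looks only at jitter, so the selected path meets the threshold while more than doubling latency, which is the gap application acceleration is meant to cover.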

Application acceleration addresses the underlying WAN-related TCP behavior that typically degrades application performance. Working together, these technologies provide the most robust and performant application delivery method possible.

Latency and server turns

Beyond a hard-down or significant packet loss, the biggest threat to an application’s performance, and therefore the end-user experience, is latency. In basic terms, latency is the amount of time it takes for a packet to go from a source to its destination. That time depends on a variety of factors, driven by the path the packets travel. So improving latency, or in other words decreasing the amount of time it takes to go from source to destination, is not something that can be done by adding bandwidth.

Packets traverse a network close to the speed of light, but they often get held up by security devices inspecting that traffic for threats or router queues as a result of traffic shaping. This applies to any endpoint, whether it’s an end-user at home, a server in a private data center, a virtual server instance in public cloud, or even an application delivered by a SaaS provider.

What is worse, for applications that use TCP poorly, even a 1 millisecond increase in one-way latency has been known to be debilitating. Therefore, alongside the excellent WAN resiliency that SD-WAN provides, we must still solve for latency.
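A rough way to see why bandwidth alone does not fix this is to split transfer time into two parts: the time to put the bits on the wire, and the time spent waiting on round trips. The sketch below assumes a "server turn" means one application-level request/response exchange that costs one round trip. The function name and all of the numbers are illustrative, not measurements.

```python
def transfer_time_s(size_bytes: int, bandwidth_bps: float,
                    rtt_s: float, turns: int) -> float:
    """Simplified model: serialization time plus one RTT per server turn."""
    serialization_s = size_bytes * 8 / bandwidth_bps
    waiting_s = turns * rtt_s
    return serialization_s + waiting_s


# Hypothetical chatty application: 10 MB moved in 1,000 turns over a 160ms RTT.
at_100_mbps = transfer_time_s(10_000_000, 100e6, 0.160, turns=1000)
at_1_gbps = transfer_time_s(10_000_000, 1e9, 0.160, turns=1000)

print(f"100 Mbps: {at_100_mbps:.2f} s")
print(f"1 Gbps:   {at_1_gbps:.2f} s")
```

Under these assumptions, a tenfold bandwidth increase saves well under a second, because almost all of the time is spent waiting on round trips rather than sending data.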

Application Acceleration and bandwidth

If you had unlimited bandwidth, would that provide a better experience for your users? The answer, as in most cases, depends on the situation. However, in demanding situations such as heavy and long file transfer sessions, Application Acceleration can make your WAN link behave almost like a LAN. So, when it is most needed, our Riverbed customers have been utilizing our acceleration solutions in various form factors: branch to branch, branch to data center, data center to data center, and SaaS to end-users.

Better together, quantitatively

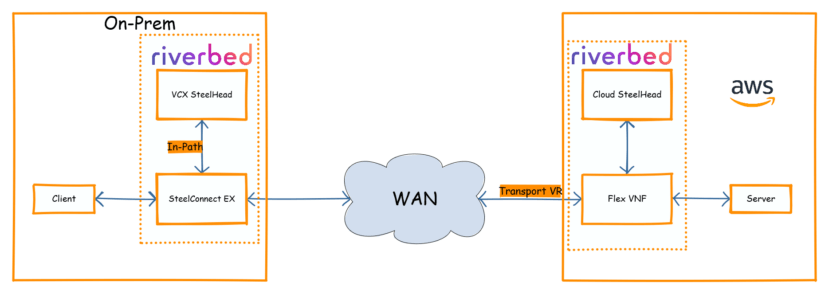

With a setup like the one below, one end in AWS utilizing our Flex VNF SD-WAN solution and the other on-prem using our SteelConnect EX appliance, we have realized performance benefits particularly for bulky file transfers.

In our performance testing with various large file sizes, we have achieved up to a sixfold improvement in file transfer times. The benefits we typically achieve with WAN Optimization independent of SD-WAN deployments are also available when SD-WAN and WAN-OPT are service chained together.

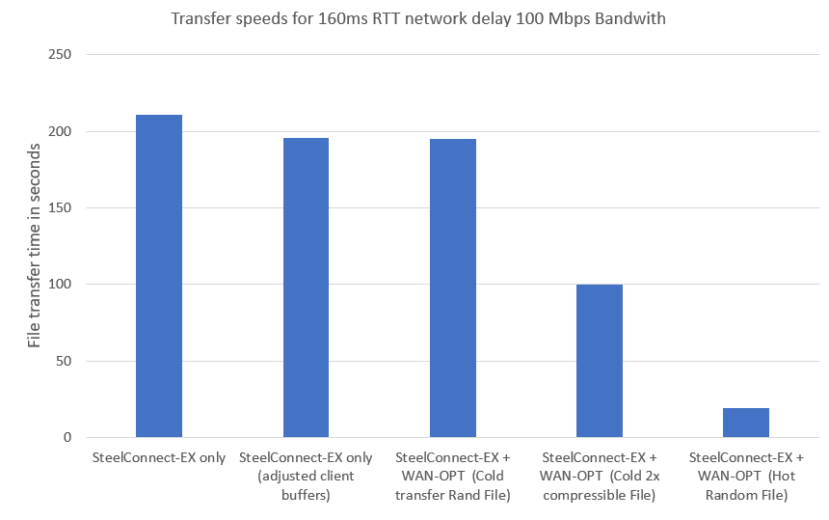

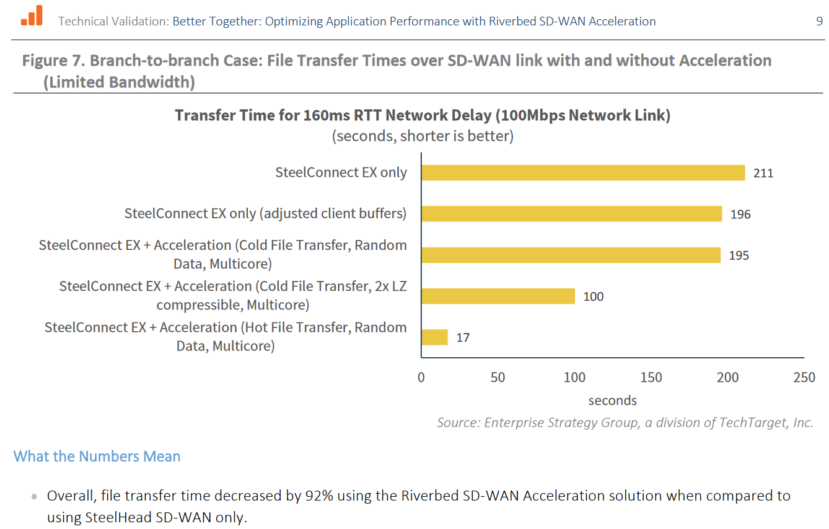

Looking closely at the graph below, the leftmost bar, “SteelConnect-Ex only,” indicates the time taken to transfer a large file (non-compressible binary) over a 100Mbps link with 160ms of latency and no optimization. Next, as a test, the end machines’ TCP buffers were adjusted to better match the bandwidth-delay product, and we can see some improvement. Next on the graph, WAN-OPT is enabled using SteelHead, but file transfer times have not improved much yet. However, a compressible version of the same size (say, a plain text file) would see a 2x speed improvement immediately in a cold (cache miss) transfer, as can be seen in the “Cold 2x compressible File” results. Finally, for “Hot Random File,” the rightmost bar on the chart, a second pass of the same non-compressible large file would produce close to a 10x speedup in file transfer time, or a 92% reduction in time compared to the first pass, regardless of which large file was used.
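The TCP buffer adjustment mentioned above comes down to the bandwidth-delay product (BDP): the amount of data that must be in flight to keep a link full. The sketch below works through that arithmetic for the 100Mbps, 160ms test link, treating the 160ms as round-trip time, which is an assumption. The 65,535-byte window is also an illustrative choice: it is the largest TCP window available without window scaling, not a value taken from the test setup.

```python
def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bytes that must be in flight to fill the link."""
    return bandwidth_bps * rtt_s / 8


def window_limited_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound on throughput when at most one window is sent per RTT."""
    return window_bytes * 8 / rtt_s


LINK_BPS = 100_000_000   # 100Mbps link from the test
RTT_S = 0.160            # assumption: the 160ms figure is round-trip time
WINDOW_BYTES = 65_535    # illustrative: max TCP window without window scaling

bdp = bdp_bytes(LINK_BPS, RTT_S)
throughput = min(LINK_BPS, window_limited_throughput_bps(WINDOW_BYTES, RTT_S))

print(f"BDP: {bdp / 1e6:.1f} MB")
print(f"Throughput with a 64 KB window: {throughput / 1e6:.2f} Mbps")
```

With these inputs the link needs about 2 MB in flight to stay full, while an unscaled window would cap a single flow at roughly 3 Mbps, which is why matching buffers to the BDP helps even before any optimization is enabled.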

These gains have been confirmed by ESG’s testing.

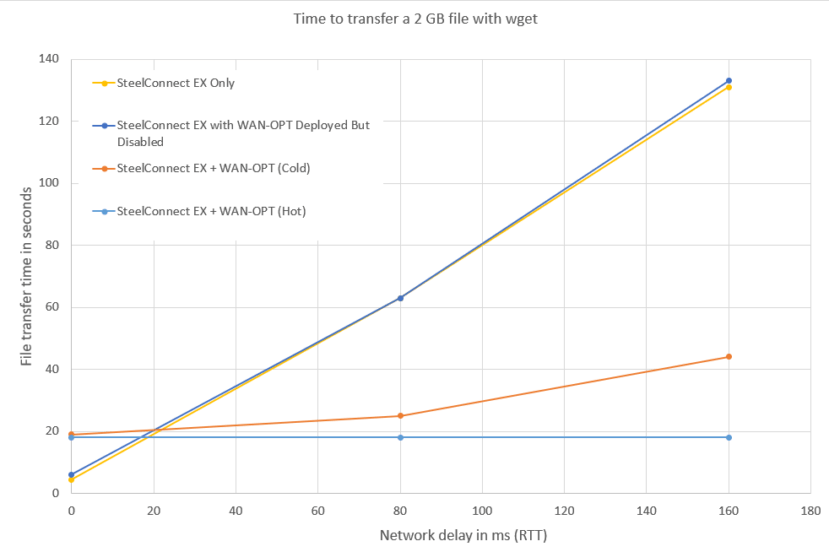

As a further detail, it is important to note that the results of testing with increasing network RTT match intuition: without WAN-OPT, file transfer times grow almost parabolically as latency increases. The bottom two lines are hot and cold transfer times for the 2GB file across various latency values. The top two lines are without WAN-OPT. The time saved with WAN-OPT is quite staggering.

Conclusion

Application acceleration specifically solves the problem of latency using a variety of methods aimed at reducing round-trip time and eliminating the effects of server turns, thereby improving an application’s performance. It also reduces congestion on a link by eliminating unnecessary traffic, caching certain information locally so it doesn’t need to traverse the network multiple times or at all, and optimizing TCP windows and buffers so end users don’t have to perform advanced TCP tuning. These advantages, combined with SD-WAN resiliency, provide the best of both worlds for our customers.
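To make the caching idea concrete, here is a deliberately simplified sketch of why a second ("hot") pass of the same data puts so little on the wire. It is not a description of how SteelHead works internally: the fixed 4 KB chunk size, the SHA-256 references, and the `bytes_on_wire` function are all assumptions chosen for illustration.

```python
import hashlib
import os

CHUNK_SIZE = 4096  # hypothetical fixed chunk size


def bytes_on_wire(data: bytes, seen: set) -> int:
    """Count bytes sent when already-seen chunks are replaced by references."""
    sent = 0
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        digest = hashlib.sha256(chunk).digest()
        if digest in seen:
            sent += len(digest)   # send only a 32-byte reference
        else:
            seen.add(digest)      # first sighting: remember it, send it whole
            sent += len(chunk)
    return sent


payload = os.urandom(1_000_000)   # random, so effectively non-compressible
seen = set()                      # stands in for the store shared by both ends
cold = bytes_on_wire(payload, seen)
hot = bytes_on_wire(payload, seen)

print(f"cold pass: {cold} bytes, hot pass: {hot} bytes")
```

On the cold pass every chunk is new, so the full payload crosses the link. On the hot pass only short references do, which mirrors the difference between cold and hot transfers described earlier.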