One thing that’s certain today is that network security is a moving target. As attackers become more sophisticated, we need to adjust the protocols we use and offer better protection for end-to-end communication. TLS is a case in point. Many vendor products have long loaded the server’s private key onto an in-path network device so that the device can decrypt the payload in transit, do whatever it needs to, and then re-encrypt it and send it on its way. Most security organizations don’t recommend this practice; however, it’s interesting to note that many security vendors themselves use this method to provide IPS/IDS and other functionality.

So, what adjustments have been made in TLS to improve overall security? Up to and including TLS 1.2, Perfect Forward Secrecy (PFS), also known as forward secrecy, was optional. In TLS 1.3, it is mandatory: every key exchange based on public-key cryptography provides PFS. This is significant because PFS prevents an in-path device from decrypting traffic with a copy of the server’s private key. Before going further, let’s cover a few TLS points at a high level.

What is TLS?

Most readers of this article are probably familiar with TLS. TLS stands for Transport Layer Security. It’s a protocol that works behind the scenes and often doesn’t get credit for the work it does. When you navigate to a secure website and the URL begins with “https,” it’s TLS that gives you the “s.” Some people still call it an SSL (Secure Sockets Layer) connection, but SSL was superseded by TLS long ago. The idea behind TLS is that it provides a secure channel between two peers, and that channel provides three essential elements:

- Authentication

- Confidentiality

- Integrity

Authentication happens per direction: the server is always authenticated, while client authentication is optional. It relies on asymmetric algorithms such as RSA or ECDSA, but that’s beyond the scope of this article. Confidentiality is another way of saying “encrypted.” Integrity ensures that data can’t be modified in transit without detection.

There are several major differences between TLS 1.2 and TLS 1.3. Notably, static RSA and static Diffie-Hellman cipher suites have been removed in TLS 1.3, and all public-key-based key exchange mechanisms now provide forward secrecy. This raises the question, “What is PFS?”
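To make the distinction concrete, here is a minimal Python sketch that classifies cipher suites by their IANA names. It assumes the standard naming convention: TLS 1.2 suites spell out the key exchange (`TLS_RSA_...` is static RSA, `TLS_DH_...` and `TLS_ECDH_...` are static Diffie-Hellman, `TLS_DHE_...` and `TLS_ECDHE_...` are ephemeral), while TLS 1.3 suite names omit the key exchange because it is negotiated separately. The helper `provides_forward_secrecy` is our own illustration, not part of any library.

```python
# The five cipher suites defined by RFC 8446 for TLS 1.3. Their names omit the
# key exchange, which TLS 1.3 negotiates separately and which is always
# ephemeral when public keys are involved.
TLS13_SUITES = frozenset({
    "TLS_AES_128_GCM_SHA256",
    "TLS_AES_256_GCM_SHA384",
    "TLS_CHACHA20_POLY1305_SHA256",
    "TLS_AES_128_CCM_SHA256",
    "TLS_AES_128_CCM_8_SHA256",
})

# TLS 1.2 (and earlier) suites whose key exchange uses ephemeral Diffie-Hellman.
EPHEMERAL_PREFIXES = ("TLS_DHE_", "TLS_ECDHE_")


def provides_forward_secrecy(suite: str) -> bool:
    """Rough check of whether a cipher suite (IANA name) gives forward secrecy."""
    if suite in TLS13_SUITES:
        return True
    # TLS_RSA_ (static RSA), TLS_DH_ and TLS_ECDH_ (static DH) fall through to False.
    return suite.startswith(EPHEMERAL_PREFIXES)


for name in (
    "TLS_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_DH_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_AES_128_GCM_SHA256",
):
    print(f"{name}: {provides_forward_secrecy(name)}")
```

A real tool would also check the negotiated protocol version and handshake mode, since a TLS 1.3 resumption that uses a pre-shared key without (EC)DHE skips the ephemeral exchange.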

PFS is a property of a key agreement protocol: it ensures that your session keys aren’t compromised even if the server’s long-term private key is. Each time a pair of peers communicates using PFS, a unique session key is generated, so every session a user initiates gets its own key. That session key is used to encrypt and decrypt the session’s traffic.

Here’s how it works without PFS, using static RSA key exchange. During session establishment, the client generates a random Pre-Master Secret, encrypts it with the server’s public key, and sends it to the server, which decrypts it with its private key. Each side then combines the Pre-Master Secret with the random values exchanged in the handshake to derive the Master Secret, from which the symmetric session keys that encrypt the session’s data are derived. If an attacker obtains the server’s private key, it can decrypt the Pre-Master Secret of any session it has captured, past or future, derive the Master Secret, and decrypt that session’s traffic.
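The following Python sketch walks through that sequence using the TLS 1.2 pseudorandom function from RFC 5246 (`P_SHA256`) and a deliberately toy RSA key built from two publicly known Mersenne primes. It uses textbook RSA without the PKCS#1 v1.5 padding real TLS applies, so treat it as an illustration only. What it shows: anyone holding the private exponent, whether the server or an in-path device loaded with the key, recovers the same Pre-Master Secret and derives the identical Master Secret, because the client and server randoms travel in cleartext.

```python
import hashlib
import hmac
import secrets

# Toy RSA key pair from two publicly known Mersenne primes (2**521 - 1, 2**607 - 1).
# Textbook RSA, no PKCS#1 padding: for illustration only, never for real use.
P = 2**521 - 1
Q = 2**607 - 1
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (Python 3.8+)


def p_sha256(secret: bytes, seed: bytes, length: int) -> bytes:
    """P_SHA256 data expansion from RFC 5246, section 5."""
    out = b""
    a = seed  # A(0) = seed
    while len(out) < length:
        a = hmac.new(secret, a, hashlib.sha256).digest()  # A(i) = HMAC(secret, A(i-1))
        out += hmac.new(secret, a + seed, hashlib.sha256).digest()
    return out[:length]


def derive_master_secret(pre_master: bytes, client_random: bytes, server_random: bytes) -> bytes:
    """master_secret = PRF(pre_master, "master secret", client_random + server_random)[0..47]."""
    return p_sha256(pre_master, b"master secret" + client_random + server_random, 48)


# Handshake randoms are sent in cleartext, so a passive observer sees them too.
client_random = secrets.token_bytes(32)
server_random = secrets.token_bytes(32)

# Client: 48-byte pre-master secret (version bytes 3,3 for TLS 1.2 + 46 random bytes),
# encrypted with the server's public key (E, N).
pre_master = b"\x03\x03" + secrets.token_bytes(46)
encrypted = pow(int.from_bytes(pre_master, "big"), E, N)

# The server, or any in-path device loaded with the private key D, recovers it.
recovered = pow(encrypted, D, N).to_bytes(48, "big")

client_ms = derive_master_secret(pre_master, client_random, server_random)
observer_ms = derive_master_secret(recovered, client_random, server_random)
print(client_ms == observer_ms)  # True
```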

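To oversimplify the function of PFS, the client and server use ephemeral Diffie-Hellman (DHE or ECDHE) to agree on new session keys for each session. Only public values cross the wire, and the private values are discarded after the handshake, so the server’s private key alone can’t recover the session keys. Make sense from a security perspective? It should. PFS makes it much more difficult to get at user traffic, and that’s the goal. But what does that mean for enterprise IT?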
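Here is a toy Python sketch of an ephemeral Diffie-Hellman exchange. It assumes a small, publicly known prime (`2**127 - 1`) and base 3 purely for readability; real TLS uses standardized groups such as X25519, P-256, or the RFC 7919 finite-field groups. Each handshake draws fresh private values, so two sessions end up with unrelated keys, and the server’s long-term private key never enters the calculation. (In real TLS the server signs its ephemeral public value with that long-term key, which is authentication, not key transport.)

```python
import hashlib
import secrets

# Toy parameters: a publicly known Mersenne prime and a small base, chosen for
# readability. Real TLS uses standardized groups (X25519, P-256, RFC 7919 FFDHE).
P = 2**127 - 1
G = 3


def ephemeral_keypair() -> tuple[int, int]:
    """Draw a fresh private exponent in [2, P-2] and compute G**x mod P."""
    private = secrets.randbelow(P - 3) + 2
    return private, pow(G, private, P)


def derive_session_key(own_private: int, peer_public: int) -> bytes:
    """Both sides arrive at G**(a*b) mod P, then hash it into a 32-byte key."""
    shared = pow(peer_public, own_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def run_handshake() -> tuple[bytes, bytes]:
    """One session: only the two public values would cross the wire."""
    client_private, client_public = ephemeral_keypair()
    server_private, server_public = ephemeral_keypair()
    client_key = derive_session_key(client_private, server_public)
    server_key = derive_session_key(server_private, client_public)
    # The private exponents go out of scope here, i.e. they are discarded.
    return client_key, server_key


first_client, first_server = run_handshake()
second_client, second_server = run_handshake()
print(first_client == first_server)   # True: both ends derive the same key
print(first_client != second_client)  # compares keys from two separate sessions
```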

TLS, PFS, and the Impact on Enterprise IT

With PFS in mind, what’s the impact on enterprise IT? First off, an enterprise must not only secure its traffic but also monitor it and deliver the required performance, and those goals pull in different directions. We don’t want to let attackers get to the data, so we protect it, but we also need to see the traffic to monitor and accelerate it. So, what is an enterprise to do?

Traditionally, we’ve used a proxy to rip open the packets, do something with them, pack them back together, and securely forward them. If you’ve worked with firewalls and IPS devices, this isn’t a new concept; in fact, it’s quite common in today’s networks. For performance and security monitoring, the process is no different.

Still, even though security teams use this traditional method of exposing encrypted data themselves, they often push back when infrastructure and monitoring teams ask to do the same. A visibility platform should be able to employ the same tricks as our security products (for what it’s worth, Riverbed NPM solutions do), and until TLS 1.3 came along, they could.

But now we have a new hurdle. TLS 1.3 is making its way onto the scene, and the traditional methods of exposing the data are no longer an option. In fact, because PFS is optional in TLS 1.2, NPM solutions that rely on the server’s private key already run into problems whenever a TLS 1.2 session uses it, even before TLS 1.3 is rolled out. Why? Because the keys are ephemeral: each one is generated for a single session and can’t be derived from the server’s private key. This makes packet inspection very challenging.

Addressing the Challenge with AppResponse

Despite the challenges that come with advancements in security protocols, here at Riverbed we have continued to look for new ways to overcome them. Before AppResponse 11.9, we supported RSA key exchange. In this scenario, the server’s private key is uploaded to AppResponse; when the client sends the encrypted pre-master secret, we decrypt it with that key and derive the session keys needed to decrypt the traffic. This is pretty much how any vendor would do it.

However, for TLS 1.2 sessions that use PFS, and for TLS 1.3 sessions, this is no longer possible. Therefore, we’ve started to make significant changes to how we handle packet decryption. With our feature enhancements, we can still provide deep visibility and performance monitoring for today’s IT organizations while maintaining the level of security that TLS 1.2 provides. How can we do this?

The PFS API

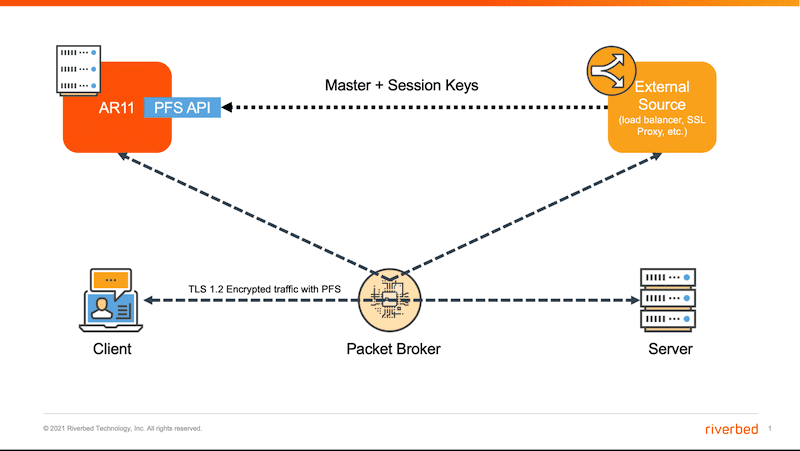

When an ephemeral Diffie-Hellman key exchange is used, each session has a unique master secret. Working with integration partners, we can retrieve the required keys, which gives us the ability to decrypt and inspect the traffic. A new PFS API in AppResponse communicates with external sources, such as a load balancer or an SSL proxy, and retrieves the master and session keys. I won’t go into the details here, but it solves the problem in an elegant way. A glimpse of the functionality is seen in the image below: the PFS API in AppResponse is always on, and master and session keys are retrieved from the external source.
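The payload format of the AppResponse PFS API isn’t described here, so the following Python sketch assumes a common stand-in: the NSS key log format (the `SSLKEYLOGFILE` format that Wireshark reads), in which each line carries a label, the session’s 32-byte client random, and a secret, all hex-encoded. Because the client random appears in cleartext in the ClientHello, a monitoring tool can use it to match an exported key to a captured session. The function names `parse_key_log` and `lookup_master_secret` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Optional

# Hypothetical key store: {client_random_hex: {label: secret_bytes}}
KeyStore = Dict[str, Dict[str, bytes]]


def parse_key_log(text: str) -> KeyStore:
    """Parse NSS key log lines of the form '<LABEL> <client_random_hex> <secret_hex>'."""
    keys: KeyStore = defaultdict(dict)
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comments
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines instead of rejecting the whole batch
        label, client_random_hex, secret_hex = parts
        try:
            secret = bytes.fromhex(secret_hex)
        except ValueError:
            continue  # secret is not valid hex
        keys[client_random_hex.lower()][label] = secret
    return dict(keys)


def lookup_master_secret(keys: KeyStore, client_random: bytes) -> Optional[bytes]:
    """TLS 1.2 master secret for a captured session, or None if no key has arrived."""
    return keys.get(client_random.hex(), {}).get("CLIENT_RANDOM")


sample = (
    "# exported by a load balancer or SSL proxy (hypothetical)\n"
    "CLIENT_RANDOM " + "ab" * 32 + " " + "cd" * 48 + "\n"
)
store = parse_key_log(sample)
print(lookup_master_secret(store, bytes.fromhex("ab" * 32)) == bytes.fromhex("cd" * 48))  # True
```

For TLS 1.3 sessions, the same lookup would use labels such as `CLIENT_TRAFFIC_SECRET_0` and `SERVER_TRAFFIC_SECRET_0` instead of `CLIENT_RANDOM`.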

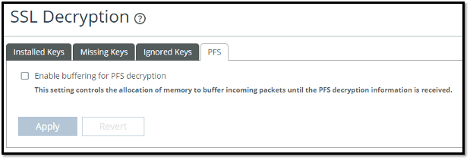

Because packets can reach AppResponse before their keys do, you might want to buffer the traffic until the keys are received; otherwise, you lose visibility into those packets. For this reason, there’s a new feature that lets you toggle buffering on.

PFS processing in AppResponse may require a lot of data to be buffered while waiting for the key. For this reason, buffering is not enabled by default, even if SSL decryption is enabled. Finally, if you disable PFS, all the keys received by the REST API are discarded.
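The trade-off described here can be modeled with a small, hypothetical Python class: packets for a session are held until its key arrives, up to a fixed memory budget, and anything beyond that budget is dropped and stays opaque. `PfsSessionBuffer`, its parameters, and the drop policy are illustrative assumptions, not AppResponse internals; the defaults simply mirror the behavior described above (buffering off unless enabled, keys discarded when PFS processing is disabled).

```python
from collections import defaultdict
from typing import Dict, List, Optional


class PfsSessionBuffer:
    """Hypothetical model: hold encrypted packets per session until its key arrives."""

    def __init__(self, max_bytes: int, pfs_enabled: bool = True,
                 buffering_enabled: bool = False) -> None:
        self.max_bytes = max_bytes                  # memory budget for waiting packets
        self.pfs_enabled = pfs_enabled
        self.buffering_enabled = buffering_enabled  # off by default, as in the text
        self.used_bytes = 0
        self.dropped = 0                            # packets we lost visibility into
        self.pending: Dict[str, List[bytes]] = defaultdict(list)
        self.keys: Dict[str, bytes] = {}

    def on_packet(self, session_id: str, payload: bytes) -> Optional[List[bytes]]:
        """Return packets that can be decrypted now, or None if held or dropped."""
        if session_id in self.keys:
            return [payload]  # key already known: decrypt immediately
        if (not self.buffering_enabled
                or self.used_bytes + len(payload) > self.max_bytes):
            self.dropped += 1  # no key and no room to wait: stays opaque
            return None
        self.pending[session_id].append(payload)
        self.used_bytes += len(payload)
        return None

    def on_key(self, session_id: str, key: bytes) -> List[bytes]:
        """Accept a key from the external source; release packets waiting for it."""
        if not self.pfs_enabled:
            return []  # PFS processing disabled: the key is discarded
        self.keys[session_id] = key
        released = self.pending.pop(session_id, [])
        self.used_bytes -= sum(len(p) for p in released)
        return released


buf = PfsSessionBuffer(max_bytes=10, buffering_enabled=True)
buf.on_packet("s1", b"abcd")   # held
buf.on_packet("s1", b"efgh")   # held (8 of 10 bytes used)
buf.on_packet("s1", b"ijk")    # dropped: would exceed the budget
print(buf.on_key("s1", b"k"))  # [b'abcd', b'efgh']
```

The fixed byte budget is what makes buffering costly: the longer keys take to arrive and the more concurrent sessions are waiting, the more memory the buffer needs before it starts dropping packets.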

Wrap-up

It’s evident that the world is changing. When it changes in the name of security, enterprises can’t afford to sacrifice visibility. Riverbed understands this and continues to provide innovative ways to address these unique challenges. To take a detailed look at Riverbed AppResponse and the capabilities discussed in this blog, watch our webcast Breakthrough Visibility & Performance for Encrypted Apps & Traffic and let your packets, whether sent in plain text or encrypted with TLS, start providing actionable answers about what’s happening in your network.