Why Model Accuracy Isn’t the Only Metric That Matters in AI

Learn how AI Assurance closes the observability gaps that accuracy metrics leave open.

For most organizations, early AI conversations revolve around one question: Is the model accurate?

It is a reasonable place to start. Accuracy shows whether a model produces correct responses and helps data science teams validate training approaches, compare architectures, and improve results.

But accuracy alone says very little about how AI behaves once it leaves the lab and enters the enterprise.

When AI becomes part of real workflows (supporting employees, serving customers, or informing business decisions), accuracy is no longer sufficient on its own.

Accuracy Is Necessary, But It’s Not Operational Intelligence

An AI model can be accurate and still fail the business.

Accuracy does not capture:

- Latency: A correct response that arrives too late degrades user experience just as surely as an incorrect one.

- Cost: Inference pricing, token consumption, and compute usage rarely appear in accuracy metrics, but they surface quickly on a bill.

- Adoption lag: A model can be accurate and still go unused. Accuracy does not indicate whether AI is trusted, adopted, or delivering value.

- Dependency impact: Many AI agents sit inside workflows, triggering actions or passing outputs downstream. When something breaks, failures often propagate silently.

In short, accuracy measures what an AI system produces. It says very little about how that system behaves in production.
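To make the contrast concrete, here is a minimal Python sketch that summarizes a hypothetical log of inference requests. The record fields, token prices, and sample values are illustrative assumptions, not Riverbed AI Assurance data or APIs. The point is that two models can report identical accuracy while differing sharply on p95 latency and cost per request, the signals listed above.

```python
import math
from dataclasses import dataclass


@dataclass
class InferenceRecord:
    """One logged request (hypothetical schema, for illustration only)."""
    correct: bool       # did the response pass evaluation?
    latency_ms: float   # end-to-end response time in milliseconds
    input_tokens: int   # prompt tokens billed
    output_tokens: int  # completion tokens billed


def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def summarize(records, price_in_per_1k, price_out_per_1k):
    """Report accuracy alongside two operational signals it does not capture."""
    n = len(records)
    accuracy = sum(r.correct for r in records) / n
    total_cost = sum(
        r.input_tokens / 1000 * price_in_per_1k
        + r.output_tokens / 1000 * price_out_per_1k
        for r in records
    )
    return {
        "accuracy": accuracy,
        "p95_latency_ms": p95([r.latency_ms for r in records]),
        "cost_per_request": total_cost / n,
    }


# Hypothetical per-1K-token prices; real pricing varies by provider and model.
PRICE_IN_PER_1K = 0.001
PRICE_OUT_PER_1K = 0.002

# Two hypothetical models, each correct on 3 of 4 requests.
model_a = [
    InferenceRecord(True, 400, 500, 200),
    InferenceRecord(True, 450, 500, 200),
    InferenceRecord(False, 500, 500, 200),
    InferenceRecord(True, 600, 500, 200),
]
model_b = [
    InferenceRecord(True, 900, 1500, 800),
    InferenceRecord(False, 1200, 1500, 800),
    InferenceRecord(True, 1500, 1500, 800),
    InferenceRecord(True, 4000, 1500, 800),
]

for name, records in [("model_a", model_a), ("model_b", model_b)]:
    s = summarize(records, PRICE_IN_PER_1K, PRICE_OUT_PER_1K)
    print(
        f"{name}: accuracy={s['accuracy']:.0%}, "
        f"p95 latency={s['p95_latency_ms']:.0f} ms, "
        f"cost/request=${s['cost_per_request']:.4f}"
    )
```

With these sample values, both models report 75% accuracy, yet model_b is several times slower at the tail and costs more than three times as much per request. An accuracy score alone would rank them as equals. With only four samples, the nearest-rank p95 is simply the slowest request; a real measurement would draw on far more traffic.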

The Real Challenges of AI in Production

At runtime, AI is subject to the same pressures as any other production technology. Performance fluctuates. Costs vary. Usage ebbs and flows.

This is where organizations encounter the same operational challenges they face with any application:

- Why does response time degrade during peak usage?

- Which AI use cases are delivering value?

- Where is adoption lagging across the organization?

- Who is using shadow AI (AI tools adopted outside IT oversight)?

- How do we detect when AI‑driven workflows drift from expected behavior?

These are not data science questions. They are operational ones.

Every business‑critical application is managed with operational discipline. As AI becomes business‑critical, it should be no exception.

AI Operational Assurance

Many organizations lack the visibility required to operate AI confidently in production. They often have limited insight into performance, cost efficiency, adoption, and risk.

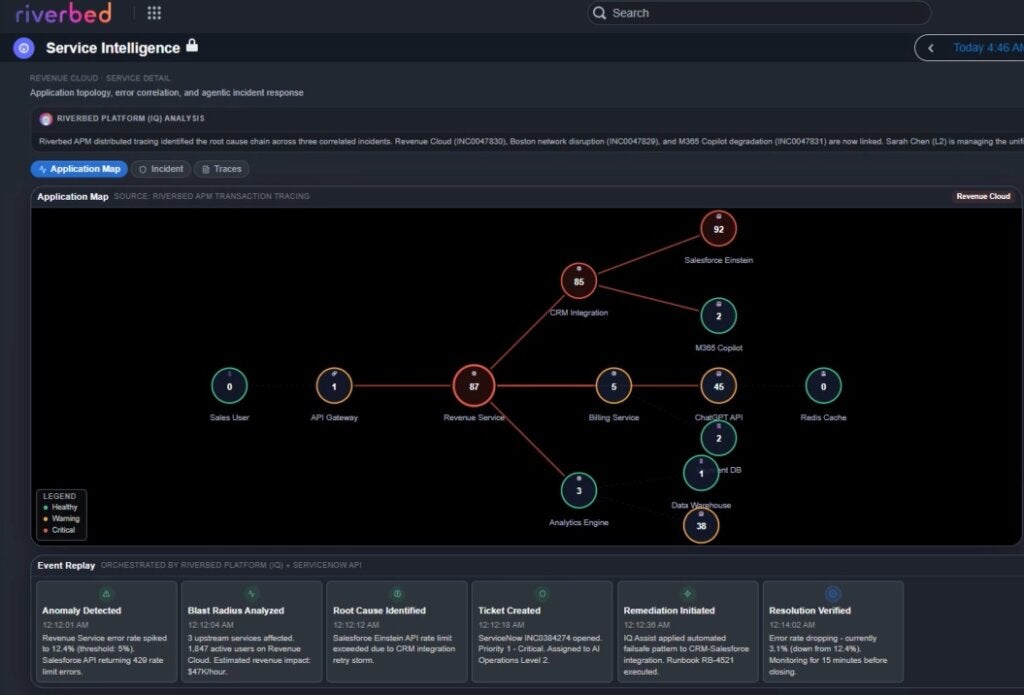

Riverbed AI Assurance addresses this gap by providing operational visibility into how AI behaves in real environments. It helps organizations answer questions such as:

- Is AI delivering consistent performance as usage scales?

- Are inference costs aligned with business value?

- Is AI being used across the organization?

By connecting AI behavior to operational and business outcomes, AI Assurance moves AI out of isolated experimentation and makes it a governable part of the enterprise stack.

Accuracy Is the Starting Line, Not the Finish

Model accuracy will always matter. But as AI becomes embedded in daily operations, organizations must look beyond correctness to understand performance, cost, adoption, and impact. Success is not solely defined by whether AI produces the right answer; it’s also defined by whether the system performs reliably, scales sustainably, and delivers expected value.

Riverbed AI Assurance provides the framework to move AI out of experimentation and into systems organizations can trust and scale.